Artificial intelligence workloads are growing rapidly, and cloud platforms have to scale to meet that demand. Google Cloud is working closely with Intel to build faster and more efficient AI systems. The collaboration aims to improve performance while keeping the infrastructure simple and scalable.

The Need for Faster AI Scaling

As more businesses adopt AI, the need for powerful and reliable infrastructure is increasing. AI workloads are complex and require systems that can manage large amounts of data quickly. Scaling these systems is not easy, especially when performance and cost both matter.

Google Cloud is addressing this challenge by using Intel technology to create balanced systems that can handle growing workloads without slowing down.

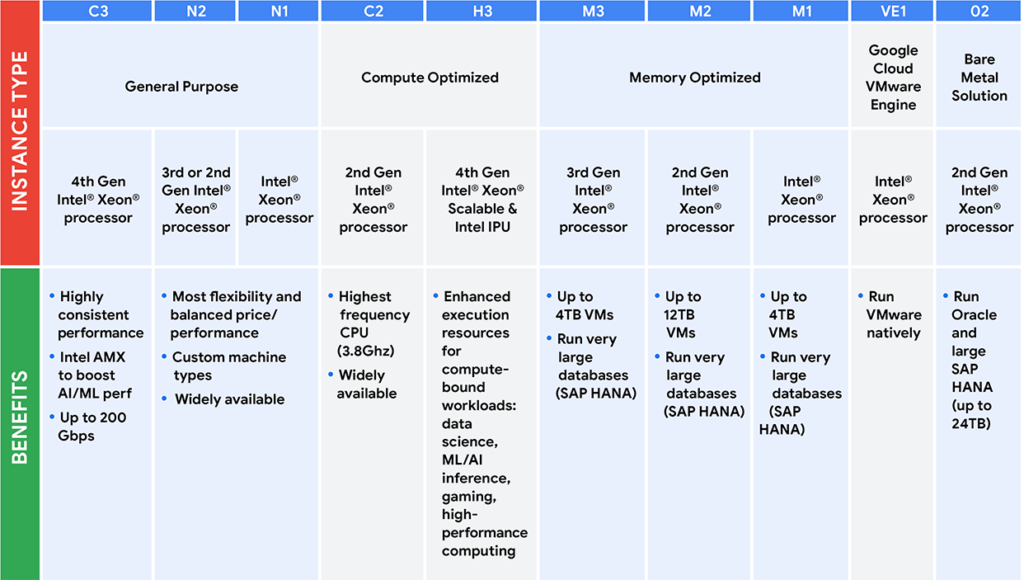

Role of Intel Xeon in Google Cloud

Intel Xeon processors are at the core of Google Cloud’s infrastructure. They are general-purpose CPUs designed to run many tasks in parallel, which makes them well suited to the data handling and coordination work that surrounds AI workloads.

They help in:

- Managing data processing efficiently

- Coordinating AI training tasks

- Supporting real-time inference

- Running general-purpose applications

Google Cloud uses recent Xeon processors in its C4 and N4 instances, which are meant to deliver consistent performance across a range of use cases.

How IPUs Improve System Efficiency

In addition to CPUs, Google Cloud is also using Infrastructure Processing Units (IPUs). These are custom-built components that handle specific data center tasks.

IPUs focus on:

- Network management

- Storage operations

- Security functions

By shifting these tasks away from the CPUs, IPUs free up processor cycles for application and AI work. The system runs more efficiently as a result, and the main processors carry less infrastructure overhead.
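The effect of offloading can be illustrated with a toy calculation. The sketch below is not a measurement of Google Cloud or Intel hardware: the function name, the 64-core host, and the 25% infrastructure overhead are all hypothetical values chosen for illustration, and it assumes the offload is complete, which real systems only approximate.

```python
def cores_for_workloads(total_cores: int, infra_fraction: float, offloaded: bool) -> float:
    """Return how many CPU cores remain for application and AI work.

    total_cores    -- CPU cores in the host (hypothetical value)
    infra_fraction -- share of CPU time spent on networking, storage and
                      security when no IPU is present (hypothetical value)
    offloaded      -- True if those tasks run on an IPU instead of the CPU
    """
    if total_cores <= 0:
        raise ValueError("total_cores must be positive")
    if not 0.0 <= infra_fraction < 1.0:
        raise ValueError("infra_fraction must be in [0, 1)")
    if offloaded:
        # Simplifying assumption: the IPU absorbs all infrastructure work.
        return float(total_cores)
    return total_cores * (1.0 - infra_fraction)


# Hypothetical host: 64 cores, 25% of CPU time spent on infrastructure tasks.
cpu_only = cores_for_workloads(64, 0.25, offloaded=False)
with_ipu = cores_for_workloads(64, 0.25, offloaded=True)
print(f"CPU only: {cpu_only} cores for workloads")
print(f"With IPU: {with_ipu} cores for workloads")
```

In this simplified model the gain comes entirely from the share of CPU time that infrastructure tasks would otherwise consume, so the benefit of an IPU grows with how network-, storage- or security-heavy the workload is.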

Comparison: Traditional Setup vs Intel-Optimized Infrastructure

| Feature | Traditional Cloud Setup | Intel-Optimized Setup |
|---|---|---|
| Workload Handling | CPU-heavy | Balanced (CPU + IPU) |
| Performance Efficiency | Moderate | High |
| Resource Utilization | Limited | Optimized |
| Scalability | Complex | Easier and flexible |
| System Stability | Less predictable | More consistent |

This optimized approach helps Google Cloud deliver better results for AI applications.

Faster and Smarter AI Performance

With Intel technology, Google Cloud can handle both large-scale AI training and real-time inference more effectively. Xeon processors run the application and AI work, while IPUs take over networking, storage, and security tasks, so each kind of work runs on hardware suited to it.

This results in:

- Faster processing speeds

- Better system reliability

- Lower operational costs

- Improved user experience

These benefits are important for businesses that rely on AI for daily operations.

Building Scalable AI Infrastructure

One of the main goals of this collaboration is to make AI infrastructure easier to scale. Instead of adding more complex systems, Google Cloud is improving how existing components work together.

By using a mix of general-purpose and specialized hardware, the platform becomes more flexible. This allows businesses to grow their AI capabilities without worrying about system limitations.

Conclusion

Google Cloud is scaling AI faster by pairing Intel Xeon processors with IPUs, so that general-purpose compute and infrastructure tasks each run on the right hardware. Together they create systems that are efficient, powerful, and easy to scale.

This approach is helping Google Cloud meet the rising demand for AI while keeping performance high and costs under control. As AI continues to evolve, such innovations will play a key role in shaping the future of cloud computing.